Agenta vs qtrl.ai

Side-by-side comparison to help you choose the right product.

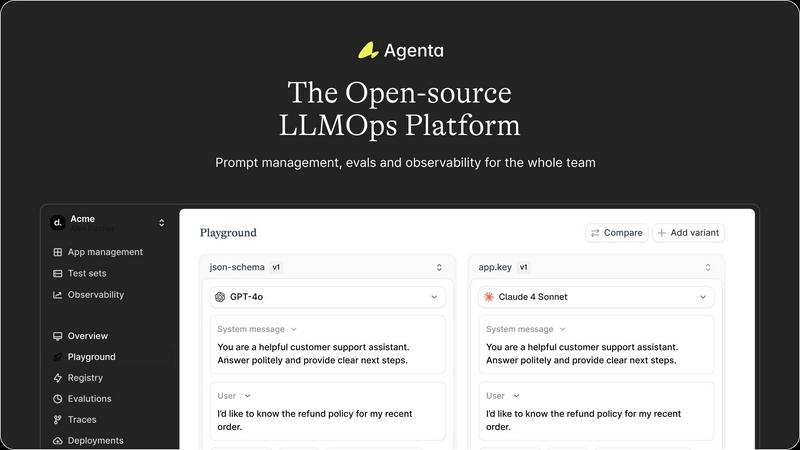

Agenta is the open-source LLMOps platform that helps teams build reliable AI apps together.

Last updated: March 1, 2026

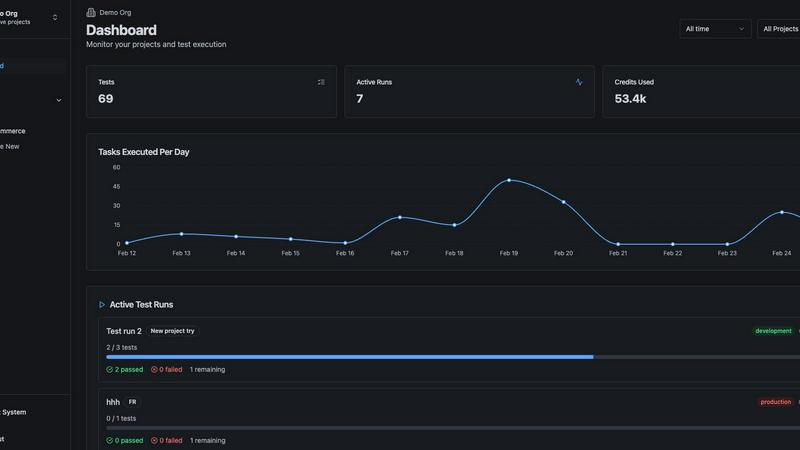

qtrl.ai

qtrl.ai empowers QA teams to scale testing with intelligent automation while maintaining full control and governance.

Last updated: March 4, 2026

Feature Comparison

Agenta

Unified Playground & Experimentation

Agenta provides a centralized playground where teams can iterate on prompts and compare models side by side in real time. Because the environment is model-agnostic, you can use models from any provider, which reduces vendor lock-in. Every prompt change is recorded in a complete version history, so teams can track iterations, revert when needed, and keep a clear audit trail of their development process, turning ad hoc experimentation into a structured workflow.

Automated & Comprehensive Evaluation

Move beyond vibe checks with Agenta's systematic evaluation framework. It enables you to create a rigorous process to run experiments, track results, and validate every change before deployment. The platform supports any evaluator, including LLM-as-a-judge, custom code, and built-in metrics. Crucially, you can evaluate the full trace of an agent's reasoning, not just the final output, and seamlessly integrate human feedback from domain experts into the evaluation workflow.

Production Observability & Debugging

Gain deep visibility into your live LLM applications with comprehensive tracing. Agenta captures every request, so you can pinpoint the exact failure point when something goes wrong. You can annotate traces with your team or gather feedback directly from end users. You can also turn any problematic production trace into a test case with a single click, closing the feedback loop and enabling continuous improvement based on real-world data.

Cross-Functional Collaboration Hub

Agenta breaks down silos by bringing product managers, domain experts, and developers into one unified workflow. It provides a safe, UI-based environment for non-technical experts to edit and experiment with prompts without touching code. Everyone can run evaluations, compare experiments, and contribute to the development process directly from the UI, while full API and UI parity ensures seamless integration between programmatic and manual workflows.

qtrl.ai

Autonomous QA Agents

qtrl.ai offers autonomous QA agents that can execute instructions on demand or continuously across multiple environments. These agents operate under user-defined rules and perform real browser execution instead of simulations, ensuring the authenticity of test results and user experiences.

Enterprise-Grade Test Management

With a robust centralized platform for managing test cases, plans, and runs, qtrl.ai provides full traceability and audit trails. This feature supports both manual and automated workflows, making it ideal for organizations that prioritize compliance and auditability in their testing processes.

Progressive Automation

The platform allows users to start with human-written instructions and gradually transition to AI-generated tests. qtrl.ai intelligently suggests new tests based on coverage analysis, enabling teams to review, approve, and refine test cases at each stage of automation.

Adaptive Memory

qtrl.ai's adaptive memory feature builds a dynamic knowledge base of the application, learning from test execution, exploration, and encountered issues. This results in smarter, context-aware test generation that becomes increasingly effective with every interaction, enhancing overall testing efficiency.

Use Cases

Agenta

Scaling Prototypes to Production

Teams with a working LLM prototype often struggle with the "last mile" to a reliable, scalable product. Agenta provides the structured workflow needed to systematically test, evaluate, and monitor changes. It replaces ad hoc deployments with evidence-based releases, so that performance improvements are verified and regressions are caught early, which improves the odds of launching AI features successfully.

Centralizing Dispersed Prompt Management

When prompts are scattered across Slack, Google Sheets, and email, consistency and version control are hard to maintain. Agenta serves as the single source of truth for all prompt versions and configurations. This centralization helps prevent drift, allows for easy rollback, and lets every team member work with the latest approved iteration, reducing costly errors and miscommunication.
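To make the idea of a single source of truth with rollback concrete, here is a minimal sketch in Python. It is an illustration only: `PromptRegistry`, `commit`, `rollback`, and `active` are hypothetical names invented for this example and are not Agenta's API. The sketch assumes an append-only history in which rolling back re-activates an older version without deleting newer ones.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory prompt store (not Agenta's API)."""

    versions: list = field(default_factory=list)  # append-only history
    current: int = -1  # index of the active version

    def commit(self, template: str) -> int:
        """Append a new version, make it active, and return its index."""
        self.versions.append(template)
        self.current = len(self.versions) - 1
        return self.current

    def rollback(self, version: int) -> str:
        """Re-activate an earlier version; history is left untouched."""
        if not 0 <= version < len(self.versions):
            raise IndexError(f"unknown version {version}")
        self.current = version
        return self.versions[version]

    def active(self) -> str:
        """Return the template every caller should be using."""
        return self.versions[self.current]


registry = PromptRegistry()
v0 = registry.commit("Summarize: {text}")
v1 = registry.commit("Summarize in one sentence: {text}")

# The newer prompt misbehaves, so re-activate the earlier one.
registry.rollback(v0)
print(registry.active())
```

Keeping the history append-only is a design choice in this sketch: the audit trail survives a rollback, and the newer version can be re-activated later.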

Implementing Rigorous Evaluation Frameworks

For teams relying on manual "vibe testing," Agenta introduces a data-driven evaluation culture. You can build automated test suites that run against every proposed change, using LLM judges, code-based checks, and human-in-the-loop feedback. This creates a systematic gatekeeping process for production, building confidence that new prompts or model configurations actually improve key metrics before they impact users.
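The gatekeeping process described above might look roughly like the following Python sketch. Everything here is an assumption made for illustration: the test set, the `contains_expected` evaluator, and the `gate` function are hypothetical and do not come from Agenta's SDK. The sketch shows only a code-based check; an LLM judge or human review would slot in as another scoring function.

```python
from typing import Callable

# Hypothetical test set: (input, text the answer should contain) pairs.
TEST_SET = [
    ("What is 2 + 2?", "4"),
    ("Capital of France?", "Paris"),
]


def contains_expected(output: str, expected: str) -> float:
    """Code-based evaluator: 1.0 if the expected text appears, else 0.0."""
    return 1.0 if expected.lower() in output.lower() else 0.0


def evaluate(app: Callable[[str], str]) -> float:
    """Run the app on every test input and return the mean score."""
    scores = [contains_expected(app(question), expected)
              for question, expected in TEST_SET]
    return sum(scores) / len(scores)


def gate(baseline: Callable[[str], str],
         candidate: Callable[[str], str],
         min_gain: float = 0.0) -> bool:
    """Approve the candidate only if it scores at least baseline + min_gain."""
    return evaluate(candidate) >= evaluate(baseline) + min_gain


# Stand-ins for two prompt or model configurations (hypothetical).
def baseline_app(question: str) -> str:
    return "I am not sure."


def candidate_app(question: str) -> str:
    answers = {
        "What is 2 + 2?": "The answer is 4.",
        "Capital of France?": "Paris.",
    }
    return answers.get(question, "")


print("baseline score:", evaluate(baseline_app))
print("candidate score:", evaluate(candidate_app))
print("ship candidate:", gate(baseline_app, candidate_app))
```

In practice the two stand-in functions would call the deployed application with different prompt versions, and the gate would run automatically before a change is promoted.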

Debugging Complex Agentic Workflows

Debugging a failing LLM agent with multiple reasoning steps is notoriously difficult. Agenta's full-trace observability lets developers see every intermediate step, input, and output. When an error occurs, engineers can drill down to the exact API call or reasoning step that failed, which reduces mean time to resolution (MTTR) and replaces guesswork with a targeted investigation.

qtrl.ai

Product-Led Engineering Teams

Product-led engineering teams can leverage qtrl.ai to enhance their testing capabilities, enabling them to ship higher-quality software faster without sacrificing control over their QA processes.

QA Teams Scaling Beyond Manual Testing

As QA teams move away from manual testing, qtrl.ai provides the necessary tools to manage complex testing requirements efficiently, facilitating a smooth transition to automated workflows while maintaining oversight.

Companies Modernizing Legacy QA Workflows

Organizations looking to modernize their outdated QA practices can utilize qtrl.ai to integrate advanced automation and AI-driven insights into their existing processes, fostering a culture of continuous improvement and innovation.

Enterprises Requiring Governance and Traceability

For enterprises with strict compliance needs, qtrl.ai offers robust governance features that ensure full visibility and traceability of testing activities, helping them meet regulatory requirements with confidence.

Overview

About Agenta

Agenta is the open-source LLMOps platform engineered to transform how AI teams build and scale. It directly tackles the core chaos of modern AI development, where prompts are scattered across communication tools, teams operate in silos, and deployment is a leap of faith. Agenta provides the essential infrastructure to implement a structured, collaborative, and evidence-based workflow, serving as the single source of truth for developers, product managers, and subject matter experts.

It is built for teams serious about moving fast without breaking things, enabling them to iterate smarter, validate thoroughly, and scale their LLM applications efficiently from fragile prototypes to robust, production-grade systems. By centralizing prompt management, automated evaluation, and comprehensive observability, Agenta empowers teams to replace guesswork with data-driven decisions, debug with precision, and ship reliable AI features with confidence.

About qtrl.ai

qtrl.ai is an innovative quality assurance (QA) platform designed to empower software teams by scaling their testing processes while maintaining strict control and governance. It seamlessly integrates enterprise-grade test management with advanced AI automation to create a centralized hub for organizing test cases, planning test runs, and tracking quality metrics through real-time dashboards. This structured foundation provides unparalleled visibility into testing activities, ensuring that engineering leads and QA managers can easily identify what has been tested, what is passing, and where potential risks may lie.

What sets qtrl.ai apart is its progressive approach to AI integration. Rather than imposing an abrupt, "black-box" AI-first methodology, qtrl.ai allows teams to gradually adopt intelligent automation. This flexibility enables teams to begin with straightforward manual test management before transitioning to sophisticated autonomous agents. These agents can generate UI tests from simple plain English descriptions, adapt tests as applications evolve, and execute them across various browsers and environments at scale. This makes qtrl.ai an ideal solution for product-led engineering teams, QA groups moving beyond manual testing, organizations modernizing outdated workflows, and enterprises that require rigorous compliance and audit trails. Ultimately, qtrl.ai aims to bridge the gap between the slow pace of manual testing and the complexity of traditional automation, providing a reliable pathway to faster and more intelligent quality assurance.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is a fully open-source platform. You can dive into the code on GitHub, contribute to the project, and self-host the entire platform. This ensures transparency, avoids vendor lock-in, and allows for deep customization to fit your specific infrastructure and workflow needs.

How does Agenta handle collaboration for non-technical team members?

Agenta features a dedicated, user-friendly web interface that allows product managers and domain experts to participate directly in the LLM development lifecycle. They can safely edit prompts in a visual playground, set up and view evaluation results, and provide feedback on traces without writing a single line of code, fostering true cross-functional collaboration.

Can I use Agenta with my existing tech stack?

Yes. Agenta is designed to be framework- and model-agnostic. It integrates with popular frameworks like LangChain and LlamaIndex, and it works with models from any provider, including OpenAI, Anthropic, Azure, and open-source models. It complements your existing tools rather than forcing a replacement.

What is the difference between evaluation and observability in Agenta?

Evaluation in Agenta refers to the systematic, often automated, testing of LLM variants against predefined metrics and test sets before deployment. Observability is about monitoring live, production systems, capturing traces, and gathering real-user feedback to detect issues and regressions. Agenta connects both: a production issue (observability) can instantly become a test case (evaluation), closing the loop.
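As a rough illustration of how a production issue can become a test case, the Python sketch below converts a recorded trace into an evaluation case. The `Trace` and `EvalCase` types and the `trace_to_eval_case` function are hypothetical names chosen for this example; they are not Agenta's data model, and the sketch assumes a reviewer supplies the corrected output.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Trace:
    """Hypothetical record of one production request."""
    trace_id: str
    inputs: dict
    output: str
    feedback: Optional[str] = None  # e.g. an end-user or reviewer comment


@dataclass
class EvalCase:
    """Hypothetical test-set entry derived from a trace."""
    inputs: dict
    expected: str
    source_trace: str  # keeps the link back to production


def trace_to_eval_case(trace: Trace, corrected_output: str) -> EvalCase:
    """Reuse the trace's inputs and pair them with a reviewed answer."""
    return EvalCase(
        inputs=dict(trace.inputs),  # copy so the trace stays unmodified
        expected=corrected_output,
        source_trace=trace.trace_id,
    )


bad_trace = Trace(
    trace_id="t-001",
    inputs={"question": "What is the refund window?"},
    output="Refunds are not available.",
    feedback="Wrong: policy allows refunds within 30 days.",
)

case = trace_to_eval_case(bad_trace, "Refunds are available within 30 days.")
print(case)
```

Storing the originating trace ID on each case is one way to keep evaluation results traceable to the production incident that motivated them.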

qtrl.ai FAQ

What makes qtrl.ai different from traditional QA tools?

qtrl.ai combines robust test management with a progressive AI automation approach, allowing teams to gradually integrate intelligent testing solutions without losing control. This contrasts sharply with traditional tools that often impose rigid automation practices.

Can teams start with manual testing before adopting automation?

Yes, qtrl.ai is designed for progression. Teams can begin with manual test management and then transition to automation at their own pace, ensuring a tailored approach that fits their specific needs and readiness.

How does qtrl.ai ensure the security of test execution?

qtrl.ai supports permissioned autonomy levels, so teams decide how much agents may do on their own. Sensitive information, such as encrypted secrets, is never exposed to AI agents during test execution.

Is qtrl.ai suitable for small teams as well as large enterprises?

Absolutely. qtrl.ai is built to scale, making it suitable for both small QA teams and large enterprises. Its flexible architecture allows organizations of all sizes to enhance their QA processes while maintaining control and oversight.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to help teams build and scale reliable AI applications. It belongs to the rapidly evolving category of tools focused on managing the lifecycle of large language models, from experimentation to production. Teams often explore alternatives for various strategic reasons. These can include specific budget constraints, the need for different feature sets like deeper MLOps integration, or a requirement for a fully managed service versus an open-source framework. The right fit depends heavily on a team's existing tech stack, in-house expertise, and growth trajectory. When evaluating options, consider your core needs: a collaborative workflow for cross-functional teams, robust evaluation and testing capabilities to ensure quality, and comprehensive observability to debug and improve systems. The goal is to find a platform that provides structure without sacrificing the agility needed to innovate quickly in the AI space.

qtrl.ai Alternatives

qtrl.ai is an innovative platform designed for quality assurance (QA) teams, specializing in scaling testing through AI agents while maintaining control and governance. By integrating robust test management with advanced AI automation, qtrl.ai offers a centralized hub for organizing test cases and tracking quality metrics, ensuring transparency in testing processes. Users often seek alternatives to qtrl.ai for various reasons, including pricing structures, feature sets, and platform compatibility. When evaluating alternatives, it's essential to consider factors such as ease of use, the adaptability of automation features, integration capabilities, and overall support for the specific needs of your QA team. A thorough assessment of these elements can help identify the right solution.