AISeedance2 vs ltx2.site

Side-by-side comparison to help you choose the right product.

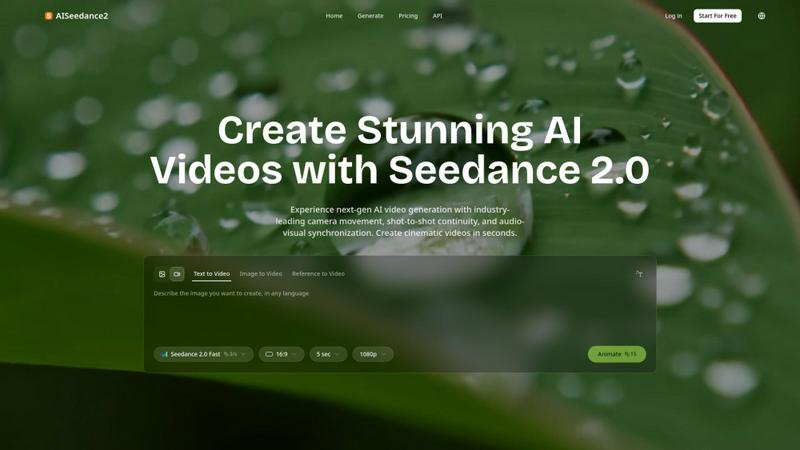

Seedance 2.0 is billed as the most powerful AI video generator, creating cinematic videos from text or images in seconds.

Last updated: February 27, 2026

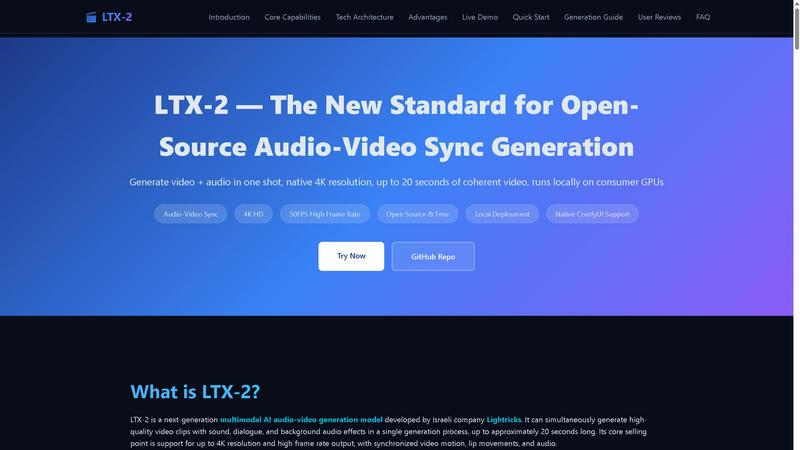

LTX-2 generates synchronized 4K video and audio on local hardware, letting you scale creative production quickly.

Last updated: February 28, 2026

Feature Comparison

AISeedance2

Wide-Range Cinematic Camera Movement

This feature provides what AISeedance2 calls the most advanced AI camera control in the industry, executing large-scale, fluid movements comparable to professional cinematography. From a single text prompt, it generates sweeping crane shots, dynamic tracking sequences, orbital movements, and drone-like aerial perspectives. The AI models cinematic motion to create smooth camera paths with accurate depth-of-field transitions and dramatic push-in/pull-out sequences, enabling complex camera choreography that, according to AISeedance2, other models cannot achieve.

Shot-to-Shot Continuity

AISeedance2 maintains perfect visual coherence and narrative flow across different scenes and shots within a video. This ensures characters, settings, lighting, and style remain consistent from one clip to the next, which is essential for producing professional multi-shot narratives like short films or commercials. This continuity eliminates the jarring inconsistencies common in AI-generated video, resulting in a polished, cohesive final product.

Precision Audio-Visual Synchronization

The platform expertly synchronizes visual elements with audio tracks, ensuring every on-screen action, scene transition, and character movement matches the beat, rhythm, and mood of the soundtrack. This creates a deeply immersive viewing experience, crucial for music videos, animated explainers, and any content where timing and emotional impact are driven by audio cues.

Character Identity Lock

This capability ensures that characters maintain their unique facial features, clothing, body type, and style consistently throughout a video, even across different scenes, angles, and actions. It is vital for storytelling, allowing viewers to follow the same character through a narrative without visual discrepancies, which is a common challenge in AI video generation.

ltx2.site

Unified Audio-Video Generation

LTX-2's groundbreaking capability is generating video and perfectly synchronized audio in a single, cohesive diffusion process. This eliminates the need for separate audio generation, tedious dubbing, and manual timeline alignment in post-production. The model inherently learns the physical correspondence between actions and sounds, ensuring character lip movements match dialogue, footsteps align with walking sequences, and background music rhythmically coordinates with on-screen action. This one-shot generation is a massive efficiency gain for professional workflows.
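To make "synchronized" concrete: on a shared timeline, each video frame corresponds to a fixed run of audio samples, and each sound lands on exactly one frame. The sketch below assumes 50 fps video (the approximate rate quoted for LTX-2) and a 48 kHz audio sample rate; the sample rate and the function names are illustrative assumptions, not LTX-2 parameters or APIs.

```python
# Map between video frames and audio samples on a shared timeline.
# Assumptions (not LTX-2 specifications): 50 fps video, 48 kHz audio.
FPS = 50
SAMPLE_RATE = 48_000


def _ceil_div(a: int, b: int) -> int:
    """Integer ceiling division for non-negative a and positive b."""
    return -(-a // b)


def samples_for_frame(frame_index: int, fps: int = FPS, sample_rate: int = SAMPLE_RATE) -> range:
    """Return the audio sample indices that play while one video frame is on screen."""
    if frame_index < 0:
        raise ValueError("frame_index must be non-negative")
    start = _ceil_div(frame_index * sample_rate, fps)
    stop = _ceil_div((frame_index + 1) * sample_rate, fps)
    return range(start, stop)


def frame_for_sample(sample_index: int, fps: int = FPS, sample_rate: int = SAMPLE_RATE) -> int:
    """Return the video frame on screen when a given audio sample plays."""
    if sample_index < 0:
        raise ValueError("sample_index must be non-negative")
    return sample_index * fps // sample_rate


if __name__ == "__main__":
    frame = samples_for_frame(10)
    print(frame.start, frame.stop, len(frame))  # 9600 10560 960
    # A footstep heard at t = 1.25 s should coincide with frame 62.
    print(frame_for_sample(int(1.25 * SAMPLE_RATE)))  # 62
```

Using ceiling division for the frame boundaries makes the sample ranges of consecutive frames partition the audio exactly, so `frame_for_sample` agrees with `samples_for_frame` even when the sample rate is not a whole multiple of the frame rate (for example, 24 fps with 44.1 kHz audio).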

Native 4K Resolution & High Frame Rate

The model is architected to deliver professional-grade output specifications natively, supporting up to 4096x2160 (4K) resolution and approximately 50 frames per second. This high-resolution, high-frame-rate output is suitable for short films and commercial content, providing outstanding detail and lighting performance. Users can leverage the output directly for professional editing without the need for additional upscaling or frame interpolation, saving significant time and computational resources in the production pipeline.

Extended Coherent Duration

LTX-2 generates up to approximately 20 seconds of continuous, coherent audio-video clips in a single inference. It emphasizes maintaining a consistent visual style across all frames, dramatically reducing common AI video artifacts like flicker and structural collapse. This extended, stable duration makes the model uniquely suited for creating complete narrative shots, scenes with intentional camera movement, and other use cases that require temporal consistency beyond short, clip-based animations.
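Taken together, the quoted resolution, frame rate, and duration describe a large amount of picture data per clip. The arithmetic below multiplies them out for one maximum-length clip. It assumes uncompressed 8-bit RGB frames, which illustrates scale only and is not how the model represents video internally.

```python
# Multiply out the quoted LTX-2 specifications for one maximum-length clip.
# Assumption: uncompressed 8-bit RGB frames (3 bytes per pixel), for scale only.
WIDTH, HEIGHT = 4096, 2160  # DCI 4K resolution quoted above
FPS = 50                    # approximate frame rate quoted above
DURATION_S = 20             # approximate maximum clip length quoted above
BYTES_PER_PIXEL = 3


def clip_stats(width=WIDTH, height=HEIGHT, fps=FPS, duration_s=DURATION_S,
               bytes_per_pixel=BYTES_PER_PIXEL):
    """Return (frame count, pixels per frame, raw bytes) for one clip."""
    frames = fps * duration_s
    pixels_per_frame = width * height
    raw_bytes = frames * pixels_per_frame * bytes_per_pixel
    return frames, pixels_per_frame, raw_bytes


if __name__ == "__main__":
    frames, pixels, raw = clip_stats()
    print(frames)                 # 1000
    print(pixels)                 # 8847360
    print(round(raw / 2**30, 1))  # 24.7 (GiB of raw RGB)
```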

Open-Source & Locally Deployable

As a fully open-source model, LTX-2 offers transparency and freedom from vendor lock-in. It is optimized for mainstream NVIDIA GPUs, enabling local deployment on consumer graphics cards with high VRAM. This architecture is reported to deliver inference several times faster than previous models at roughly 50% lower computational cost. Local deployment removes dependence on cloud services, giving users full control over their data, scalability, and operating costs.

Use Cases

AISeedance2

Short Film & Narrative Production

Independent filmmakers and content studios can leverage AISeedance2 to rapidly prototype and produce short films. The tool's shot continuity, character lock, and cinematic camera movement allow for the creation of compelling narratives with professional visual flow, significantly reducing production time and costs while enabling creative experimentation at scale.

Marketing & Advertising Creative

Marketing teams can generate high-impact video ads, product demos, and social media content quickly. The ability to produce 2K cinematic visuals with precise audio sync and dynamic camera work helps brands create engaging, polished creatives that capture attention and drive conversions without the need for expensive video shoots.

Educational & Explainer Content

Educators and course creators can transform complex information into engaging animated explainer videos. The audio-visual synchronization feature is perfect for matching visuals to voiceovers, while character consistency helps in creating recurring instructor avatars, making educational content more relatable and easier to follow for learners.

Dynamic Social Media Production

Content creators and influencers can produce a high volume of trendy, visually striking clips for platforms like TikTok, Instagram Reels, and YouTube Shorts. Fast generation, including the "Seedance 2.0 Fast" mode, allows rapid iteration on viral trends, producing professional-grade content that stands out in crowded social feeds.

ltx2.site

Rapid Prototyping for Film & Animation

Independent filmmakers and animation studios can use LTX-2 to rapidly prototype scenes, visualize storyboards, and create animatics with synchronized sound. This allows for quick iteration on creative concepts, testing of narrative flow, and presentation of high-fidelity proofs-of-concept to stakeholders long before committing to expensive traditional production pipelines, dramatically accelerating pre-production.

Scalable Social Media & Marketing Content

Marketing teams and content creators can leverage LTX-2 to produce a high volume of engaging, professional-quality short-form video content for platforms like TikTok, Instagram Reels, and YouTube Shorts. The ability to generate unique, synchronized audio-video clips on-demand enables consistent content calendars, A/B testing of concepts, and personalized video ads at a scale previously impossible without large budgets.

Development of Interactive Media Applications

Developers and startups can integrate LTX-2 as a core engine for building next-generation interactive applications. This includes tools for dynamic video game asset creation, personalized video messaging platforms, AI-powered video editing suites, and immersive experiential marketing. The local deployment capability ensures these applications can be scaled reliably and cost-effectively.

Educational & Training Material Production

Educators and corporate training departments can efficiently produce high-quality instructional videos and simulations. LTX-2 can generate clear visual demonstrations accompanied by perfectly timed narration and sound effects, making complex topics more engaging and easier to understand. This reduces the barrier to creating compelling educational content in-house.

Overview

About AISeedance2

AISeedance2, also known as Seedance 2.0, is presented as the most powerful AI video generator available today, aimed at creators, marketers, educators, and production teams. Developed by ByteDance, the platform turns text prompts, images, or reference videos into cinematic-quality content in seconds. Its core value proposition is professional-grade video production without the technical expertise and resources such work previously required. AISeedance2 is built for users who need to scale video creation quickly, and its industry-first features aim to make every output cohesive, dynamic, and visually compelling. By pairing advanced AI with an intuitive interface, it lets users produce short films, marketing creatives, educational content, and social media clips quickly and at high quality, helping drive growth and engagement for digital businesses.

About ltx2.site

LTX-2 by Lightricks is a foundational, open-source multimodal AI model for creative video generation. It lets creators, developers, and businesses generate synchronized, high-fidelity 4K video and audio in a single, streamlined process. The tool is built for users who want professional-grade cinematic output without the usual bottlenecks of cloud subscriptions, API costs, or complex multi-stage post-production pipelines. Its core value proposition is up to 20 seconds of coherent, high-frame-rate video in which every element (character lip movements, action sound effects, and background music) is aligned with the visual narrative from the first frame. With native 4K resolution and integration with popular node-based workflow tools such as ComfyUI, LTX-2 serves as a scalable, foundational technology that puts studio-quality audio-video generation directly in users' hands. This enables rapid prototyping, high-volume content creation, and the development of next-generation media applications, all deployable locally on consumer-grade hardware. It represents a shift toward democratizing high-end media production.

Frequently Asked Questions

AISeedance2 FAQ

What makes AISeedance2 different from other AI video generators?

AISeedance2 sets itself apart with three industry-first, breakthrough capabilities: wide-range cinematic camera movement for professional shots, shot-to-shot continuity for seamless scene transitions, and precision audio-visual synchronization. Combined with features like 2K resolution and character identity lock, it offers a more complete and professional filmmaking toolkit that produces higher-quality, more cohesive multi-shot videos.

What kind of videos can I create with AISeedance2?

You can create a wide variety of video content, including short films, marketing and advertising creatives, educational explainers, social media clips, action sequences, and character-driven narratives. The platform supports text-to-video, image-to-video, and reference-to-video generation, providing flexibility for different creative workflows and project needs.

How does the Character Identity Lock feature work?

The Character Identity Lock feature uses advanced AI models to recognize and consistently replicate a specific character's defining attributes—such as facial features, hairstyle, clothing, and body type—across every frame and scene of your generated video. This ensures your main subject remains visually constant, which is essential for coherent storytelling and professional-quality output.
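The description above does not say how identity is represented internally, but the effect can be checked after generation. One common approach is to compute an identity embedding for the character in sampled frames, using any face-recognition model you choose, and compare each one with a reference. The sketch below shows only the comparison step; the embeddings and the 0.8 threshold are hypothetical placeholders, and this is a verification idea, not AISeedance2's method.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 means same direction)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def drifting_frames(reference: Sequence[float],
                    frame_embeddings: Sequence[Sequence[float]],
                    threshold: float = 0.8) -> List[int]:
    """Indices of frames whose identity embedding strays from the reference.

    The default threshold is a hypothetical placeholder; tune it for your embedding model.
    """
    return [i for i, emb in enumerate(frame_embeddings)
            if cosine_similarity(reference, emb) < threshold]


if __name__ == "__main__":
    reference = [1.0, 0.0, 0.0]
    frames = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 1.0, 0.0]]
    print(drifting_frames(reference, frames))  # [2]
```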

Is AISeedance2 suitable for beginners with no video editing experience?

Yes, AISeedance2 is designed with an intuitive interface that simplifies professional video creation. Users can start by simply typing a text prompt. The AI handles the complex aspects like cinematography, continuity, and sync. This makes it accessible for beginners while still providing the advanced features that professional creators need to scale their production.

ltx2.site FAQ

What hardware do I need to run LTX-2 locally?

To run LTX-2 effectively, you need a consumer-grade NVIDIA GPU with substantial video RAM (VRAM); the model is heavily optimized for NVIDIA architectures. For 4K generation, a GPU with 16 GB or more of VRAM is recommended. The official NVFP4/NVFP8 low-precision weights reduce memory use and computational load, which helps make high-resolution output feasible on capable consumer hardware.
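Low-precision weights matter because weight memory scales directly with bits per parameter. The sketch below estimates weights-only memory for a hypothetical 10-billion-parameter model (a placeholder, not LTX-2's published size) and compares it with the 16 GB recommendation, treated here as 16 GiB for simplicity.

```python
# Weights-only memory estimate at different numeric precisions.
# NUM_PARAMS below is a placeholder; substitute the parameter count from the model card.
# Block-scaled formats such as NVFP4 also store per-block scale factors, so real
# checkpoints are somewhat larger than bits/8 bytes per parameter.
BITS_PER_PARAM = {"fp16/bf16": 16, "fp8": 8, "fp4": 4}


def weight_memory_gib(num_params: float, bits: int) -> float:
    """Memory needed to hold the weights alone, in GiB."""
    return num_params * bits / 8 / 2**30


def weights_fit(num_params: float, bits: int, vram_gib: float) -> bool:
    """Necessary but not sufficient: activations and other pipeline components need memory too."""
    return weight_memory_gib(num_params, bits) <= vram_gib


if __name__ == "__main__":
    NUM_PARAMS = 10e9  # hypothetical 10-billion-parameter model
    for name, bits in BITS_PER_PARAM.items():
        gib = weight_memory_gib(NUM_PARAMS, bits)
        print(f"{name}: {gib:.2f} GiB, fits in 16 GiB: {weights_fit(NUM_PARAMS, bits, 16)}")
    # fp16/bf16: 18.63 GiB, fits in 16 GiB: False
    # fp8: 9.31 GiB, fits in 16 GiB: True
    # fp4: 4.66 GiB, fits in 16 GiB: True
```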

How does LTX-2 synchronize audio and video?

LTX-2 uses a multimodal diffusion architecture that jointly models the temporal (video motion), spatial (visual content), and acoustic (audio waveform) dimensions within a single neural network. During its training on vast datasets, the model learns the inherent physical and semantic relationships between actions and sounds—like the correlation between a mouth shape and a phoneme or a door moving and a creaking sound—allowing it to generate both modalities in a synchronized manner from a unified latent space.
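One way to picture a shared latent timeline: each video latent and each audio latent covers an interval of time, and the tokens whose intervals overlap are the ones a joint model can relate when it learns that a swinging door goes with a creak. The toy below computes those overlaps. The step sizes (8 video frames per latent at 50 fps, and 40 ms per audio latent) are hypothetical, and this illustrates temporal alignment in general, not LTX-2's actual architecture.

```python
from fractions import Fraction
from typing import Dict, List


def cross_modal_overlaps(video_step: Fraction, audio_step: Fraction,
                         duration: Fraction) -> Dict[int, List[int]]:
    """Map each video latent index to the audio latent indices overlapping it in time.

    All times are in seconds, as Fractions, so interval boundaries compare exactly.
    """
    n_video = int(duration // video_step)  # whole video latents in the clip
    n_audio = int(duration // audio_step)  # whole audio latents in the clip
    overlaps = {}
    for v in range(n_video):
        v_start, v_end = v * video_step, (v + 1) * video_step
        overlaps[v] = [
            a for a in range(n_audio)
            # half-open intervals [start, end) overlap if each starts before the other ends
            if a * audio_step < v_end and v_start < (a + 1) * audio_step
        ]
    return overlaps


if __name__ == "__main__":
    VIDEO_STEP = Fraction(8, 50)  # hypothetical: 8 frames per video latent at 50 fps = 0.16 s
    AUDIO_STEP = Fraction(1, 25)  # hypothetical: 40 ms per audio latent
    pairs = cross_modal_overlaps(VIDEO_STEP, AUDIO_STEP, Fraction(1))
    print(pairs[0])  # [0, 1, 2, 3]
    print(pairs[5])  # [20, 21, 22, 23]
```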

Can I control the content of the generated video?

Yes, LTX-2 offers flexible control over the generation process. The primary control is a detailed text prompt describing the scene, action, and desired audio. The model also accepts conditioning inputs such as images or sketches to guide visual composition. When LTX-2 is used through workflow tools such as ComfyUI, users can also choose between operational modes (e.g., Fast, Pro, Ultra) to balance generation speed against output quality.
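For scripted control through ComfyUI, a workflow exported in ComfyUI's API format can be edited as JSON and queued on a running ComfyUI server by POSTing it to the `/prompt` endpoint (default address `127.0.0.1:8188`). In the sketch below, the node ID `"6"`, the `CLIPTextEncode` class, and the `text` input are placeholders: check the IDs and input names in your own exported LTX-2 workflow, which may use different nodes.

```python
import copy
import json
import urllib.request


def set_node_input(workflow: dict, node_id: str, input_name: str, value) -> dict:
    """Return a copy of an API-format workflow with one node input replaced."""
    if node_id not in workflow:
        raise KeyError(f"node {node_id!r} not found in workflow")
    updated = copy.deepcopy(workflow)  # leave the caller's workflow untouched
    updated[node_id]["inputs"][input_name] = value
    return updated


def build_queue_request(workflow: dict, host: str = "127.0.0.1:8188") -> urllib.request.Request:
    """Build, but do not send, a POST of the workflow to ComfyUI's /prompt endpoint."""
    body = json.dumps({"prompt": workflow}).encode("utf-8")
    return urllib.request.Request(
        f"http://{host}/prompt",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder workflow fragment; a real exported workflow has many more nodes and links.
    workflow = {"6": {"class_type": "CLIPTextEncode", "inputs": {"text": ""}}}
    wf = set_node_input(workflow, "6", "text", "A lighthouse at dusk, waves crashing, gulls calling")
    req = build_queue_request(wf)
    print(req.full_url, req.get_method())  # http://127.0.0.1:8188/prompt POST
    # With a ComfyUI server running, urllib.request.urlopen(req) would queue the job.
```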

Is LTX-2 completely free to use?

Yes, LTX-2 is released as an open-source model. You can download the model weights, run them on your own hardware, and integrate the model into your projects without subscription costs from Lightricks, in line with its goal of democratizing access to high-end audio-video generation. Commercial use is governed by the model's license, so review its terms before deploying it in a business context. Beyond that, you are responsible only for the cost of your own computational resources.
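The running cost of local generation is mostly electricity, which is easy to estimate. The figures in the example (a 450 W GPU draw, 120 seconds per clip, and a tariff of 0.30 per kWh) are hypothetical; substitute your own measurements. Hardware purchase and depreciation are not included.

```python
def generation_energy_cost(gpu_watts: float, seconds: float, price_per_kwh: float) -> float:
    """Electricity cost of one run: watt-seconds -> kWh, multiplied by the tariff."""
    if gpu_watts < 0 or seconds < 0 or price_per_kwh < 0:
        raise ValueError("inputs must be non-negative")
    kwh = gpu_watts * seconds / 3600 / 1000  # W*s -> W*h -> kWh
    return kwh * price_per_kwh


if __name__ == "__main__":
    per_clip = generation_energy_cost(450, 120, 0.30)  # hypothetical figures
    print(f"{per_clip:.4f} per clip")                  # 0.0045 per clip
    print(f"{per_clip * 1000:.2f} per 1,000 clips")    # 4.50 per 1,000 clips
```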

Alternatives

AISeedance2 Alternatives

AISeedance2 is a next-generation, web-based AI video generator that transforms text and images into dynamic videos. It operates in the competitive AI video creation category, empowering creators and teams to produce cinematic content with advanced features like camera movement and character consistency. Users often explore alternatives for various reasons, including budget constraints, specific feature requirements, or different platform integrations. Finding the right fit depends on your project scale, desired output quality, and workflow needs. When evaluating other options, consider core capabilities like resolution, motion control, and audio sync. Prioritize tools that align with your content goals, whether for rapid social clips or high-fidelity narrative projects, ensuring they can scale with your creative ambitions.

ltx2.site Alternatives

ltx2.site is the home of LTX-2, an open-source AI model for unified audio-video generation. It belongs to the category of multimodal creative tools that produce synchronized 4K video and sound in a single, local process. Despite its capabilities, some users explore alternatives to meet different scaling needs: they may require other deployment models, specific feature integrations, or commercial licensing options that fit their production pipeline and growth trajectory. When evaluating other solutions, focus on the core differentiators: output quality and resolution, the quality of audio-video synchronization, deployment flexibility, and total cost of ownership. The right tool should match your technical requirements and creative ambitions.