Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Hostim.dev

Hostim.dev deploys Docker apps with built-in databases on simple, fast EU hosting.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI instantly benchmarks over 100 LLMs on your exact task for cost, speed, and quality with no setup or API keys.

Last updated: March 26, 2026

Visual Comparison

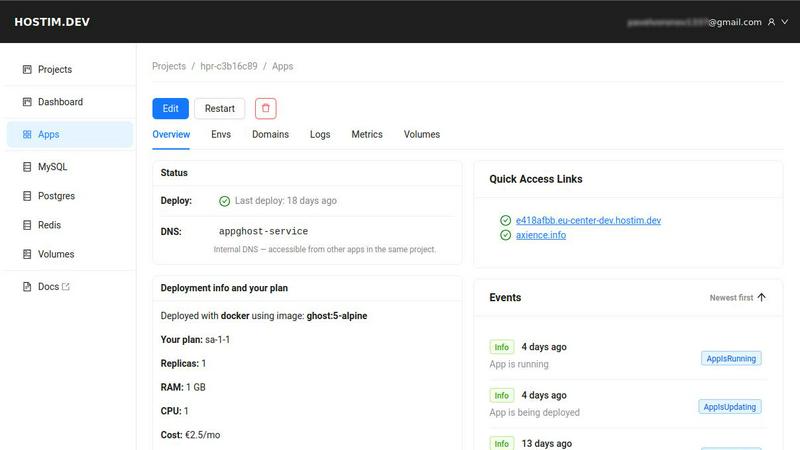

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Deploy with Docker, Git, or Compose

Launch your application in minutes using the tools you already know: paste your existing Docker Compose file, point to a Git repository, or push a Docker image. You don't need to rewrite your application or learn a platform-specific configuration format, so your app stays portable and you avoid lock-in from day one.

Built-in Managed Databases & Storage

Instantly provision production-ready MySQL, PostgreSQL, and Redis databases alongside persistent storage volumes directly from the dashboard. These services are automatically connected to your application with pre-wired environment variables, eliminating hours of manual setup and configuration for a fully integrated backend stack.
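To illustrate what pre-wired environment variables mean for application code, here is a minimal Python sketch that reads a database connection string from the environment. The variable name `DATABASE_URL` and the example URL are assumptions made for illustration; this page does not say which names Hostim.dev actually injects.

```python
import os
from urllib.parse import urlparse


def read_database_config(env=os.environ, var_name="DATABASE_URL"):
    """Parse a connection URL from the environment into its parts.

    `DATABASE_URL` is a hypothetical variable name, not a documented
    Hostim.dev setting; check the dashboard for the real names.
    """
    raw = env.get(var_name)
    if raw is None:
        raise KeyError(f"{var_name} is not set")
    url = urlparse(raw)
    return {
        "scheme": url.scheme,
        "host": url.hostname,
        "port": url.port,
        "user": url.username,
        "database": url.path.lstrip("/"),
    }


# Made-up value standing in for whatever the platform would inject.
example_env = {"DATABASE_URL": "postgresql://app:secret@db.internal:5432/appdb"}
config = read_database_config(example_env)
print(config["host"], config["port"], config["database"])
```

Because the application only reads from the environment, the same code can run locally and on the platform without hard-coded credentials.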

EU Bare-Metal Performance & Compliance

Your projects run on dedicated, high-performance bare-metal servers located in Germany. Running on dedicated hardware avoids the virtualization overhead and shared-resource contention of virtualized cloud instances, giving more consistent speed and resource availability. By hosting in the EU, every project is GDPR compliant by default, a critical requirement for serving European customers.

Transparent Per-Project Billing

Get a clear view of spending with cost tracking isolated per project. See real-time, hourly usage and get predictable, flat monthly rates starting from €2.50. This model suits freelancers and agencies who need to quote clients accurately, with no hidden fees or surprise bills.

OpenMark AI

Plain Language Task Description

Describe the task you want to benchmark in natural language, without writing code or doing intricate prompt engineering. The platform's editor lets you define your exact use case, from creative writing and translation to complex RAG and agentic workflows, making benchmarking accessible to the entire product team.

Multi-Model Comparison in One Session

Run the same prompt simultaneously against dozens of leading models from providers like OpenAI, Anthropic, and Google. This side-by-side testing replaces tedious manual, sequential API calls with a unified results dashboard where you can compare performance, cost, and quality metrics head-to-head in minutes.

Real Cost & Stability Analysis

See the true cost per API request for your specific task and, more importantly, analyze output stability across multiple runs. OpenMark AI measures variance to show you which models deliver consistent, high-quality results every time, moving beyond a single data point to ensure reliability before you commit to a model for production.

Hosted Benchmarking with Credits

Skip the hassle of sourcing and configuring individual API keys for every model you want to test. The platform operates on a credit system, providing direct access to its extensive model catalog. This streamlined approach dramatically reduces setup time and operational overhead, letting you focus purely on evaluation and decision-making.

Use Cases

Hostim.dev

Rapid Prototyping for Startups

Quickly validate your SaaS idea by deploying a full-stack prototype in minutes. With built-in databases and one-click templates for frameworks like Express.js and Django, you can focus on building features instead of managing infrastructure, accelerating your path to market and user feedback.

Client Project Management for Agencies

Deliver and hand over projects cleanly by isolating each client's application in its own dedicated project with separate billing. This provides clear cost breakdowns, secure environments, and easy transfer of ownership, streamlining agency workflows and client relationships.

Learning and Portfolio Building for Developers

Students and developers can learn Docker, databases, and deployment on real, production-grade infrastructure. The free trial and student credits allow for hands-on experience building portfolio projects that demonstrate real-world skills, not just local development.

Scalable SaaS Application Foundation

Build your scalable software-as-a-service with a foundation that grows with you. Start simple and seamlessly add resources, databases, and services through the UI as your user base expands, all without re-architecting your deployment or managing Kubernetes clusters.

OpenMark AI

Pre-Deployment Model Validation

Before shipping a new AI feature, product teams can rigorously test candidate models on their actual task. This validates which model delivers the required quality at an acceptable cost and latency, de-risking development and preventing costly post-launch model switches.

Cost-Efficiency Optimization for Scaling Applications

For applications generating high volumes of AI calls, finding the most cost-effective model is critical. Developers use OpenMark to benchmark models not just on headline token price, but on the real cost-to-quality ratio for their workload, optimizing operational expenses as user demand grows.
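A cost-to-quality comparison of this kind can be sketched in a few lines of Python. The model names, prices, and quality scores below are invented for illustration, and the ratio used here (cost per request divided by quality score) is one simple assumption, not OpenMark AI's documented metric.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkResult:
    model: str               # hypothetical model label
    cost_per_request: float  # USD, made-up figure
    quality: float           # score in [0, 1], made-up figure


def cost_per_quality_point(result):
    """Cost of one request divided by its quality score (lower is better)."""
    if result.quality <= 0:
        return float("inf")
    return result.cost_per_request / result.quality


def rank_by_value(results, min_quality=0.0):
    """Drop models below a quality floor, then sort by cost per quality point."""
    eligible = [r for r in results if r.quality >= min_quality]
    return sorted(eligible, key=cost_per_quality_point)


results = [
    BenchmarkResult("model-a", cost_per_request=0.0040, quality=0.92),
    BenchmarkResult("model-b", cost_per_request=0.0010, quality=0.80),
    BenchmarkResult("model-c", cost_per_request=0.0002, quality=0.45),
]
ranked = rank_by_value(results, min_quality=0.75)
print([r.model for r in ranked])
```

The quality floor matters: without it, the cheapest model can rank first on the ratio alone even when its output is too weak for the workload.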

Ensuring Output Consistency and Reliability

When building features where predictable performance is non-negotiable—like data extraction or classification—teams benchmark models across multiple runs. This identifies which providers offer stable, low-variance outputs, ensuring end-users have a reliable and consistent experience.

Rapid Prototyping and Model Selection

During the ideation phase, developers can quickly test which LLMs are best suited for a new concept. By describing the prototype task, they can get immediate feedback on quality and capability across the model landscape, accelerating the initial design and technical feasibility assessment.

Overview

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) built for developers who want to ship software fast without sacrificing control or performance. It is engineered to remove the traditional DevOps bottleneck, so you can go from code to a live, production-ready application in minutes. The platform is designed to scale your project from a simple prototype to a robust, multi-service SaaS without you ever needing to manage underlying servers, networking, or complex Kubernetes YAML configurations.

You maintain full container portability by deploying directly from your existing Docker images, Git repositories, or complete Docker Compose files with zero rewrites. Hostim.dev automatically provisions and wires together your entire stack: managed MySQL, PostgreSQL, and Redis databases, persistent storage volumes, automatic HTTPS, and secure internal networking. Every project runs in its own isolated environment on high-performance bare-metal servers in Germany, ensuring GDPR compliance by default and top-tier EU performance.

With transparent, per-project hourly billing and real-time cost tracking, you get predictable, flat pricing, free from the surprise bills of big cloud ecosystems. It is aimed at freelancers, startups, agencies, and SaaS builders who prioritize speed, simplicity, and scaling with confidence.

About OpenMark AI

OpenMark AI is a platform for task-level LLM benchmarking, built to take the guesswork out of choosing AI models. It is a web application where developers and product teams describe their specific task in plain language (for example data extraction, classification, or agent routing) and run benchmarks against a catalog of over 100 models in a single session.

The platform delivers side-by-side comparisons of real API performance, moving beyond theoretical datasheets to show actual cost per request, latency, scored output quality, and stability metrics across repeat runs. This focus on variance reveals consistency rather than a single lucky output, so your AI feature is built on a reliable foundation.

By using a hosted credit system, OpenMark AI removes the friction of configuring and managing multiple API keys, which speeds up pre-deployment validation. It is designed for teams who prioritize cost efficiency and performance at scale, helping you pinpoint the best model for your workflow and budget before you ship.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

Hostim.dev offers a 5-day free trial project with no credit card required. The trial includes full access to the platform, so you can deploy your application using Docker, Git, or Compose and use the free tiers of the managed databases to test your complete stack.

Can I deploy with just a Docker Compose file?

Absolutely. You can paste your existing Docker Compose file directly into the Hostim.dev dashboard and deploy your multi-service application instantly. The platform handles all the underlying orchestration, requiring no modifications to your standard Compose configuration.

Where is my application hosted?

All applications are hosted on high-performance bare-metal servers in German data centers within the European Union. This ensures GDPR compliance by default and provides low-latency performance for your European user base.

Do I need to know Kubernetes or DevOps?

No, you do not. Hostim.dev abstracts away the complexity of Kubernetes, server management, and networking. You get the isolation of Kubernetes namespaces without ever having to write or manage a single line of Kubernetes YAML.

OpenMark AI FAQ

How does OpenMark AI differ from standard model leaderboards?

Standard leaderboards use fixed, generalized datasets (like MMLU) that may not reflect your specific use case. OpenMark AI performs benchmarking with your prompts and tasks, providing real API cost, latency, and stability data from models running your exact workload, giving you actionable insights for your product.

Do I need my own API keys to use OpenMark AI?

No. OpenMark AI uses a hosted credit system. You purchase credits and the platform manages API access to its extensive catalog of models. This eliminates the need to sign up for and configure multiple provider accounts and keys, streamlining the entire benchmarking process.

What does "stability" or "variance" testing mean?

When you run a benchmark, OpenMark AI can execute your task multiple times with the same model. Stability analysis shows how much the outputs and scores vary across these runs. A model with low variance is more predictable and reliable for production, whereas high variance indicates inconsistent performance.
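As a rough illustration of the idea, the Python sketch below summarizes repeated-run scores for two models by their mean and standard deviation. The scores are invented, and the page does not state how OpenMark AI computes its stability metric; standard deviation is used here only as one common measure of variance.

```python
from statistics import mean, pstdev


def stability_summary(scores):
    """Return mean, standard deviation, and coefficient of variation."""
    avg = mean(scores)
    spread = pstdev(scores)
    cv = spread / avg if avg else float("inf")
    return {"mean": avg, "stdev": spread, "cv": cv}


# Made-up quality scores from four runs of the same task per model.
runs = {
    "model-a": [0.90, 0.91, 0.89, 0.90],
    "model-b": [0.98, 0.70, 0.95, 0.72],
}
for name, scores in runs.items():
    s = stability_summary(scores)
    print(f"{name}: mean={s['mean']:.3f} stdev={s['stdev']:.3f}")
```

In this made-up data, model-b reaches the highest single score but swings widely between runs, while model-a stays within a narrow band, which is the kind of difference a single-run benchmark would hide.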

What kind of tasks can I benchmark on OpenMark AI?

You can benchmark virtually any LLM task. Common examples include text classification, summarization, translation, creative writing, code generation, data extraction, question answering, complex reasoning, and evaluating components of RAG pipelines or multi-agent systems. Describe your task in plain language to get started.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a modern, bare-metal PaaS designed for developers to deploy Docker applications with integrated databases on high-performance EU hosting. It streamlines the journey from code to production by handling infrastructure, networking, and managed services, allowing builders to focus purely on their application.

Developers explore alternatives for various reasons, such as needing a different geographic region, a specific pricing model, or advanced platform features beyond Hostim.dev's current scope. The search often stems from a project's evolving requirements around scale, compliance, or cost predictability.

When evaluating other platforms, key considerations include deployment flexibility, the simplicity of adding managed services, infrastructure transparency, and billing clarity. The ideal solution should match your technical stack, growth trajectory, and operational philosophy without locking you into a proprietary ecosystem.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level LLM benchmarking, helping teams choose the right model before shipping an AI feature. Users often explore alternatives to find a solution that better fits their specific budget, required feature set, or integration needs with their existing platform.

When evaluating other options, it's crucial to look beyond basic model lists. Consider whether the tool provides real, side-by-side performance data from actual API calls, measures stability across multiple runs, and calculates true cost efficiency for your specific task, not just token price.

The goal is to find a benchmarking solution that delivers actionable insights for pre-deployment validation. This means seeing variance in outputs, understanding latency trade-offs, and getting a clear picture of which model offers the best quality relative to its cost for your unique workflow.